The chances are that you’ve heard about ChatGPT, the general-purpose chatbot prototype that has taken the world by storm over the last few months. It has become a sensationalized trending topic across many industries, with predictions ranging from benign productivity enhancements to doomsday endgame scenarios (yes, I am looking at you, James Cameron and Wachowski sisters). But before diving into what is fact, what is fiction and what lies in between, we first need to understand what exactly ChatGPT is. To demonstrate ChatGPT in action, I’ve asked it questions related to various sections of this article and included them in context along the way (and note, I asked these AFTER I wrote each section).

The GPT in ChatGPT stands for Generative Pre-trained Transformer, which is the name of the family of models on which ChatGPT is built. Generative models are a type of machine learning model that can generate new content, such as written text, images, or even spoken language. The “pre” in pre-trained refers to training a model on a large dataset before refining it to handle more specific, task-oriented objectives using smaller data sets. The “transformer” is a type of neural network architecture (like a synthetic brain) that can be applied to Natural Language Processing (NLP) tasks, such as language translation or summarization of text.

Machine learning (ML) is at the core of the GPT architecture. In simplest terms, machine learning is a way to teach computers to learn from data without being explicitly programmed. Machine learning is a sub-field of Artificial Intelligence (AI) that uses many algorithms to identify patterns in data, learn from those patterns, and draw out insights and conclusions from the data. There are three main types of machine learning: supervised, unsupervised, and reinforcement.

Supervised learning is the most common. This is where the algorithm is trained on explicit or labeled data and desired outcomes and conclusions are presented alongside the input data. The algorithm then makes predictions on outcomes from new data sets that it has not yet seen based on what it has learned from the training data. Unsupervised learning is where the algorithm draws insights and outcomes on its own, without having any labeled or explicit data ahead of time. Reinforcement learning is where the algorithms have a feedback loop from the environment in which they are trained, typically referred to as penalties and rewards, ultimately helping the algorithm fine-tune its decision making.
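To make the idea of supervised learning concrete, here is a minimal sketch in Python. It is only an illustration: the data, the labels, and the function names `train` and `predict` are made up for this example, and the "nearest average" approach is one of the simplest possible techniques, not what ChatGPT uses.

```python
from statistics import mean

# Hypothetical labeled training data: (marketing emails received per week, outcome).
# The outcomes are the "desired conclusions" presented alongside the inputs.
training_data = [
    (1, "engaged"), (2, "engaged"), (3, "engaged"),
    (8, "unsubscribed"), (9, "unsubscribed"), (10, "unsubscribed"),
]

def train(examples):
    """Learn one average value per label from the labeled examples."""
    values_by_label = {}
    for value, label in examples:
        values_by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in values_by_label.items()}

def predict(model, value):
    """Pick the label whose learned average is closest to the new value."""
    return min(model, key=lambda label: abs(model[label] - value))

model = train(training_data)

# Predictions on inputs the model has not seen before.
prediction_low = predict(model, 4)
prediction_high = predict(model, 7)
print(prediction_low, prediction_high)
```

The model was never told what to do with 4 or 7 emails per week; it generalizes from the labeled examples it was given, which is the essence of the supervised approach.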

While machine learning is a sub-field of AI, there are many others as well. The simplest way to describe the primary difference between machine learning and other forms of AI is that machine learning models are trained on data, can learn from it, predict outcomes, evolve, and change their decision making with more and new data sets. On the other hand, traditional rule-based AI systems operate based on a set of pre-programmed rules and decision-making processes and do not adjust to new data.
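The contrast between a pre-programmed rule and a rule learned from data can be sketched in a few lines of Python. Everything here is hypothetical (the order amounts, the fixed cutoff of 100, and the function names), and using a simple average as the "learned" cutoff is a deliberately crude stand-in for real model training.

```python
def rule_based_is_big_order(amount):
    """Pre-programmed rule: the cutoff of 100 was chosen by a person and never changes."""
    return amount > 100

def learn_cutoff(order_amounts):
    """Learned rule: the cutoff is the average of all orders seen so far."""
    return sum(order_amounts) / len(order_amounts)

orders = [20, 40, 60]
cutoff_before = learn_cutoff(orders)

# New data arrives, and the learned cutoff shifts with it.
orders += [400, 480]
cutoff_after = learn_cutoff(orders)

print(cutoff_before, cutoff_after)
print(rule_based_is_big_order(150))  # the fixed rule gives the same answer regardless
```

The learned cutoff moves as more orders come in, while the hand-written rule stays the same until someone edits it.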

ChatGPT was launched as a free preview on November 30, 2022, by OpenAI. OpenAI launched as a non-profit in 2015, with a mission of, “[ensuring] that artificial general intelligence (AGI), by which we mean highly autonomous systems that outperform humans at most economically valuable work, benefits all of humanity.” In 2019, the business evolved into a “capped-profit” business, meaning that investor returns would be capped at 100 times their investment. This was done with the belief that non-profits could not compete with for-profits, given the top talent would put their efforts into businesses where they could gain financially.

In 2020, GPT-3, which is the predecessor of the current ChatGPT, was launched. GPT-3 is a language model trained on large internet datasets to answer questions using natural language, but also to translate between languages and generate coherent, improvised text. In 2021, OpenAI developed DALL-E, which is a deep learning model that can generate digital images from natural language text inputs. These technologies were the precursors to ChatGPT, which is an AI chatbot derived from GPT-3.5. ChatGPT was released to publicly show the progress and applications of the technology. It took the world by storm, with over a million users in the first five days and an estimated 100M monthly active users in January 2023, just two months after launch. Its meteoric rise has led to capacity issues; many people who recently tried to access the site were met with a notice that it was at capacity.

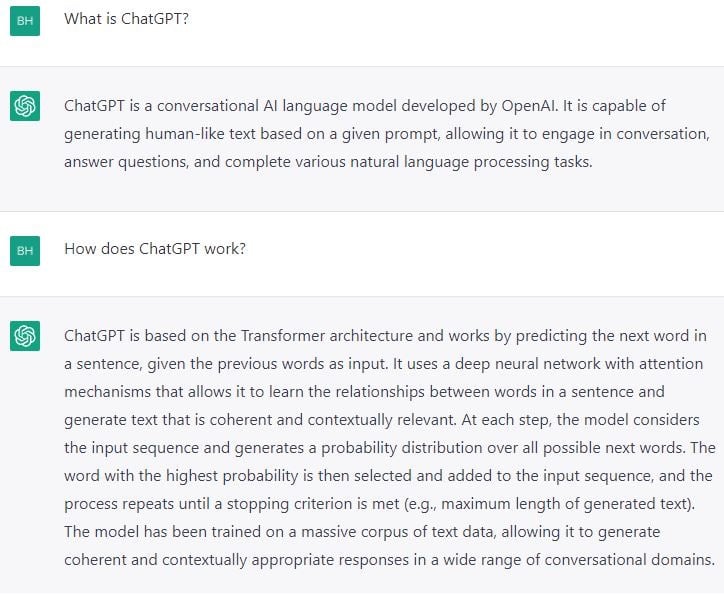

In ChatGPT's words...

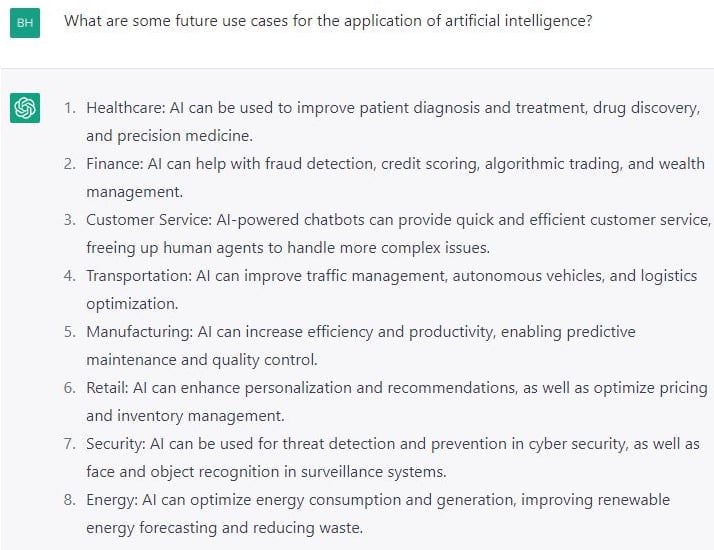

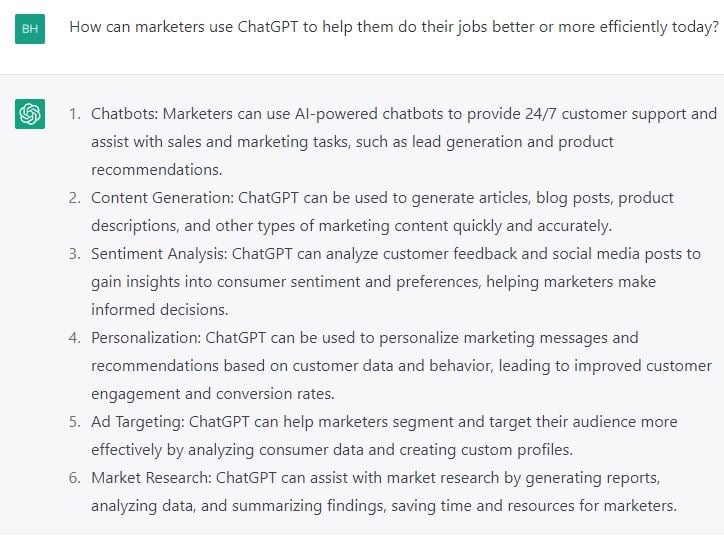

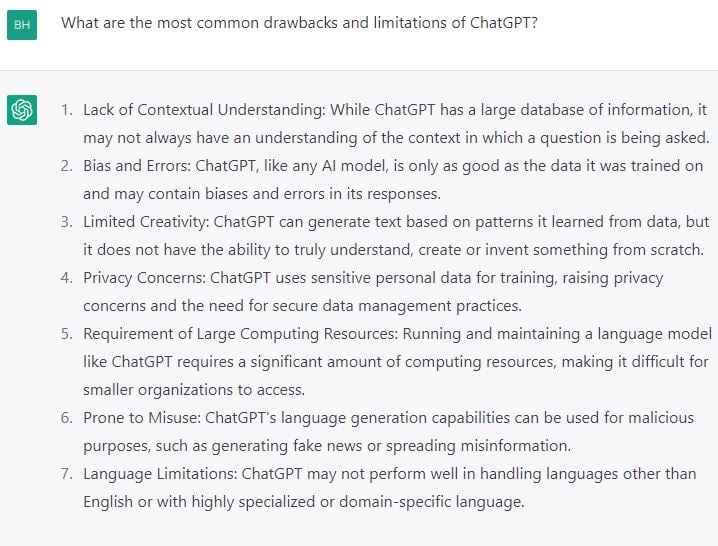

In ChatGPT's words...

Now that we know what ChatGPT is, it is worth examining why it is garnering so much attention. While many of the underlying technologies in ChatGPT are not necessarily new and many have origins in foundational work done by organizations such as Google, DeepMind, or Meta, this is the first large-scale public demonstration in which AI technology was put into the public’s hands for free use.

ChatGPT is a massive, innovative step in our technological evolution, on par with the public adoption and propagation of the Internet. We are just starting to scratch the surface of what can be done with AI. It is not farfetched to believe that the productivity gains that can be achieved by AI are comparable to productivity surges of the past driven by widely adopted technical innovations like electricity and the internal combustion engine. These technologies also relied on many complementary co-inventions like factory redesigns, infrastructure such as highways and railways, modern business processes, and new labor skills to fully reach their potential. These co-inventions took decades to fully materialize. A similar expectation should be set for AI.

Accordingly, we are at the dawn of a major step forward in productivity and I believe AI has the potential to be the next major productivity driver for humankind. Not only will AI spawn a new era of growth by augmenting human potential and replacing countless hours of research and authorship, but it will require an entirely new set of support structures around data structuring, governance, security, privacy, and software development. We already see the leading AI scientists earning salaries equivalent to those of NFL quarterbacks, reflecting the disparity between supply and demand for these valuable skills. What can a future that embraces AI look like? Some predictions include:

- Autonomous vehicles, networked together to prevent traffic jams and accidents and to pre-reserve parking spaces. Think of the potential time gained while commuting in a fully autonomous vehicle. This advancement would also likely lead to less vehicle ownership, as autonomous ride sharing services could take you anywhere on time with a simple advance reservation.

- Chatbots will evolve from assistants that respond to inputs to agents who can make decisions and assist users based on their prior behaviors and data. Get curated shopping suggestions or airline deals on your favorite airline in the window seat that the bot knows you prefer.

- Financial assistance: AI in the financial industry could handle personalized investment portfolios or be used for fraud detection systems.

- Medical advancements: Imagine cancer and drug research processes accelerated with AI-trained models, and the time that could be saved on testing and drug approvals. AI could also assist with monitoring human health for early disease detection.

- The development of precision farming techniques and the use of AI for crop monitoring and prediction could ease famine and increase food yields.

- Personal education: AI could provide customized learning plans and assistance with online education, helping to advance continued education studies.

While these examples are all plausible realities in the future, there are countless ways that ChatGPT can augment day-to-day work, spark new ideas, increase productivity, and improve efficiencies today. Here are some applications for ChatGPT that marketers can consider now:

- Customer Service: fast and accurate responses to customer inquiries could free up customer service agents to focus on critical and specialized tasks. ChatGPT can help shorten long response times, replace dull and predictable chatbot responses, support quality control for inappropriate agent behavior, and improve reliability.

- Content Creation: accelerate the speed of content delivery. Articles, emails, ads, and even poems can be developed with the help of AI. Additionally, companies can also use ChatGPT to help generate content ideas and topics and maintain a monthly content calendar for social media posts.

- Research and Content Curation: ChatGPT’s ability to draw on the wide range of topics and sources covered in its training data can help condense hours of research into curated summaries.

- Customer Engagement: engage customers on social media or offer discussion starters on a website’s blog or forum, improving a business’ online presence and customer engagement.

- New Hire Training: trainees could ask follow-up questions when they are uncertain on the new job. ChatGPT can provide straightforward answers to questions and can be used to initiate dynamic conversations, optimizing training methods using reinforcement learning.

- Coding: ChatGPT is useful for developers, as it can help them to write small pieces of code and debug that code effectively to remove any errors. Some basic web applications and web pages can be developed seamlessly by the AI.

- Lead Nurturing: nurture leads and drive them into a company’s sales pipeline. ChatGPT can recall previous user comments, context, times, and methods of communication and other data points and develop the best ways to communicate with each prospect.

- Keyword Research: assist search marketers with keyword research by identifying synonyms for root keywords and alternative ways to develop search phrases based on NLP and search volumes.

- Ad Copy Development: help automate the development of unique and well-written ad copy across hundreds of campaigns. ChatGPT can generate ideas for ad copy and lay out the structure of an ad to enhance productivity. In addition, ChatGPT can be used to develop email copy and subject lines.

- Naming / Titling: a good name, title, and/or headline is key to helping a piece of content rank well on search engines and drive click-through rates (CTRs). ChatGPT can help generate names and titles for blog articles, podcasts, and webinars.

- A/B Testing: ChatGPT can automate A/B testing experiments to uncover campaign insights and drive iterative performance improvements.

Now that we’ve discussed the promise of ChatGPT and identified practical applications, we must also understand that this is still an evolving technology, and as such, it does have limitations and drawbacks. Such limitations include the inability to answer questions that are worded in a specific way, as ChatGPT often requires rewording to understand the input question clearly.

A bigger limitation is a lack of quality in the responses that ChatGPT delivers. The responses can sometimes sound plausible but make no practical sense, or they can be excessively verbose. In addition, instead of asking for clarification on ambiguous questions, the model will guess at what your question means, which can lead to unintended responses. While the answers given by ChatGPT typically look like they might be good answers and are delivered in a highly confident tone, they are often just words that sound good from a statistical point of view. The bot cannot yet reliably understand the meaning of its answers or discern whether they are correct. As a result, there is still a high inaccuracy rate for certain applications. ChatGPT itself says, "My responses are not intended to be taken as fact, and I always encourage people to verify any information they receive from me or any other source." OpenAI also notes that ChatGPT sometimes writes "plausible sounding but incorrect or nonsensical answers."
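A toy example can show how text that merely "sounds good from a statistical point of view" comes about. The Python sketch below is not how ChatGPT works internally; it is a far simpler technique (a bigram model that always picks the word most often seen after the current one), and the tiny corpus and function names are invented for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical training text in which a false statement appears more often than a true one.
corpus = (
    "the moon is made of rock . "
    "the moon is made of cheese . "
    "the moon is made of cheese ."
)

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, max_words=10):
    """Repeatedly append the most frequent follower until a period or the word limit."""
    words = [start]
    while len(words) < max_words and words[-1] != ".":
        followers = counts.get(words[-1])
        if not followers:
            break
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(corpus)
sentence = generate(model, "the")
print(sentence)  # the moon is made of cheese .
```

The output is fluent and confident, yet wrong, because the model only tracks which words tend to follow which. It has no notion of whether the resulting sentence is true.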

The unreliability of the responses has led the site Stack Overflow to ban ChatGPT-generated responses to questions. Similarly, BuzzFeed and CNET also abandoned the hasty application of AI to write news stories because they were often riddled with errors. This has all led to a growing concern that the Internet and underlying stores of information will soon be tainted by this erroneous AI-generated content. It’s possible that we are now exiting the brief window where a good fraction of all human knowledge was searchable and readily available, a timeframe that started with the invention of the search engine and ended with the invention of large language models. There is a very real fear among AI researchers that the last large bodies of human-written text have already been captured and that all future states of the Internet, from which text for training models is drawn, will be contaminated by machine-speak.

Another drawback of ChatGPT is that the data corpus used to train it includes data only up to 2021. This means that current events and any developments from the past year or more will not be included. While that should suffice for general and historical queries, 15% of Google searches each day are new, evidencing a need for fresh and current data.

Academia also has its concerns about the use of ChatGPT. There is worry that AI chatbots could replace or degrade human intelligence. The chatbot can write an entire essay in mere seconds, making it easier for students to cheat and stunting the process of learning how to write properly. This has led some school districts to block access to ChatGPT. It can also take tests (goodbye SAT) and produce passable working drafts of PhD-level theses (albeit with strong fact checking needed). In addition, experts have concerns that the technology could be abused for purposes such as carrying out scams, conducting cyberattacks, spreading misinformation, and enabling plagiarism.

AI has also had a historical problem with bias, including racial bias. In 2016, less than a day after its public release, Microsoft had to take down its AI, Tay, after public users prompted the bot to, appallingly, call for a race war, suggest that Hitler was correct, and tweet “Jews did 9/11.” Yikes. Similarly, Meta defended its AI, BlenderBot, after it made racist comments, but then pulled down another AI tool, Galactica, after just three days. The reason? There was significant criticism over its inaccurate and sometimes biased summaries of scientific research. ChatGPT has not yet resolved the concerns around bias, as it too can generate discriminatory results and has demonstrated bias when it comes to minority groups. When the training data is biased, the model and its outputs will inherently be biased as well.

Which is a good segue into the moral imperative that we now face. We are on the precipice of a new era, the dawn of a future in which we can leverage AI to dramatically increase productivity and progress. But there are pitfalls and risks in not doing so responsibly. Some AI ethicists fear that the rush to bring AI advancements to market could expose billions of people to potential harm before trust and safety experts have been able to assess and study the risks in full.

In a recent interview with StrictlyVC, the CEO of OpenAI, Sam Altman, was asked about the best-case and worst-case scenarios for AI. His best-case response was non-descript and utopian, but his worst-case scenario thoughts were frightening: "The bad case—and I think this is important to say—is, like, lights out for all of us." Altman continued, "I'm more worried about an accidental misuse case in the short term. So, I think it's like impossible to overstate the importance of AI safety and alignment work. I would like to see much, much more happening."

Furthermore, the percolating attention around ChatGPT is generating pressure inside Big Tech to move faster. The technology that is powering ChatGPT was based on the foundational developments of companies like Google and Meta, and it isn’t necessarily any better. But OpenAI’s public release of its language models has given it a real advantage in that it can now further train the models with millions of user inputs through reinforcement learning from human feedback. Microsoft’s strategic investment in OpenAI, and its inclusion of ChatGPT to help power its Bing search engine, have put pressure on Google to accelerate its own AI releases. Google, which helped pioneer some of the technology underpinning ChatGPT, recently issued a “code red” around launching AI products and proposed a “green lane” to shorten the process of assessing and mitigating potential harms, according to a report in the New York Times. Shortly thereafter, Google announced the launch of Bard, its experimental, conversational AI chat service, as a response to the popularity of ChatGPT and the potential threat posed by its inclusion in Bing. Exemplifying the concerns around accuracy, Google stock took a $100B bath when an advertisement accompanying Bard’s public unveiling showed an inaccurate result. Bard incorrectly stated that the James Webb Space Telescope took the very first pictures of exoplanets; those images were, in fact, taken in 2004 by the European Southern Observatory’s Very Large Telescope (and yes, that is the real name).

If mistakes of that magnitude can be made with the current state of AI, it does make one pause to consider what other potential problems could result from an “arms race” with differentiating AI technology. “The pace of progress in AI is incredibly fast, and we are always keeping an eye on making sure we have efficient review processes, but the priority is to make the right decisions, and release AI models and products that best serve our community,” said Joelle Pineau, managing director of Fundamental AI Research at Meta.

We have now opened “Pandora’s Bot.” The temptation and promise of a future in which AI powers a utopian state has proved too exciting to ignore. It is clear that, if done correctly and responsibly, we could unlock an exponential rise in human progress and productivity. However, there are clearly still many concerns and issues with which we need to contend. We cannot allow private interest to sidestep the safety measures necessary to ensure we proceed ethically and responsibly, nor can we allow that private interest to rush past the warning signals in pursuit of more market share or profits. It does sound unbelievable to imagine a world in which generative AI creates an existential threat for mankind, but it is not out of the realm of possibility. Oxford University researchers recently addressed the UK Parliament, warning that advanced algorithms could eventually “kill everyone,” per Popular Mechanics. Governments, too, may be motivated by individual nations’ ambitions to create and maintain an edge over other foreign powers.

This is an instance, similar to nuclear proliferation, where we must develop regulations to safeguard the power of the underlying technology. It is paramount that we responsibly govern the proliferation of AI technologies in a way that allows progress but does not ignore the very serious risks. AI is powerful enough to change the world for the better but could also lead to a true existential threat. It may be unrealistic, but I would like to see global alignment on how this is done. At the very least, I believe we can expect to see the EU and U.S. pass regulations. If ungoverned social media has taught us anything, it is the danger that unpoliced technologies, built on unregulated data and weak privacy protections, can cause. I’m all but certain that the social sharing of AI-generated content, with deepfakes as a notable example, will ensure that some regulation happens.

I would also suggest that we make it clear when AI is used to generate content, the way sponsored content is labeled in the digital media industry today. This will allow end users to make their own determinations and to responsibly check underlying sources and facts should they choose to do so, especially before propagating the content further.

And ethical efforts are being made to ensure AI is propagated responsibly; organizations such as the World Benchmarking Alliance and Principles for Responsible Investment encourage the biggest AI developers to consider and be transparent about their policies. Strong AI investors also understand the delicate moment we are in and make their investments accordingly. Sarah Fay, who as Managing Director at Glasswing Ventures leads and evaluates investments in early-stage AI-powered companies, reflected, “In the words of late media theorist, Neil Postman, ‘the greater the wonders of a technology, the greater will be its negative consequences.’ As an investor in AI technology, I am excited by generative AI development and will participate in its advancement. But I am also hyper conscious of the risks posed, and my firm has established boundaries that ensure the companies in which we invest are dedicated to ethical AI development and adhering to the Universal Guidelines of AI.”

AI is great at augmenting human thinking. It’s great at taking large data sets and running exponential scenarios at rates far faster than teams of humans could ever do. It’s also exceptional at taking repetitive tasks with clear rules for decisioning and replicating them at scale. And in safe, tested, validated applications, AI can be the most powerful tool mankind has ever seen. The internet and social connectivity are the shoulders on which AI stands today. They are the fabric from which AI will be made. AI can and will unlock a new golden age of productivity and growth, where the quality of human life can be improved and prolonged, and I believe that’s the future we’ll see. But to ensure that we achieve that future, we need to take the proper steps to govern that progress responsibly, and not cut corners for profits at the risk of mankind.
